As part of recent work to improve the performance of emoncms, prompted by high loads on emoncms.org, there is now an emoncms branch that uses redis to store feed and input metadata. This includes the last feed time and value fields, which were causing significant write load on the server.

Using redis in this way gives quite a big performance improvement and could potentially lengthen the lifespan of Raspberry Pi SD card systems significantly.

I've been working on the redis implementation with Ynyr Edwards, a good friend and an experienced software developer who recently joined Glyn and me, helping us with development and running the shop. Ynyr had been telling me for a long time about the usefulness of in-memory databases and caching for improving performance. There appeared to be a significant amount of waiting on IO on emoncms.org, and the mysql processlist was full of last time and value updates to the feeds metadata table.

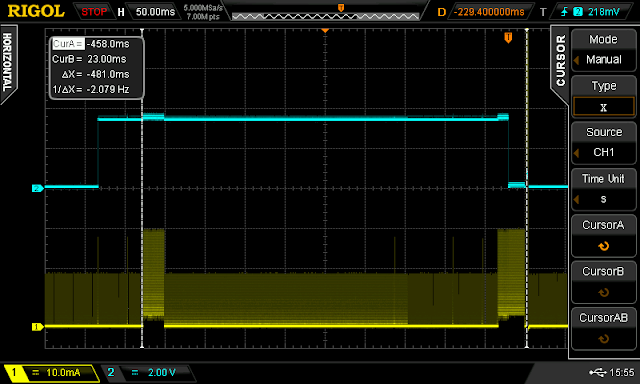

To compare mysql and redis for storing input and feed metadata in emoncms, a test was created that is representative of the typical kind of data input seen in emoncms.

The test consisted of a node posting 3 power values, with each value being “logged to a feed” and also processed into kWh/d data and histogram data. That makes 9 feeds in total: 3 of them timestore based and 6 mysql based.

The node post rate was set to once a second and the time taken for each request was measured. After the single request times were measured, a second test was carried out, which involved sending requests continuously and measuring the time taken to make 100 sequential requests, from which the average requests per second value was determined.
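The measurement described above can be sketched roughly as follows. This is only an illustration of the method, not the script used for the results in this post: the function name `time_sequential_requests` and the `send_request` callable are made up for the example, and the caller supplies whatever actually posts to emoncms.

```python
import time
from typing import Callable, List, Tuple


def time_sequential_requests(
    send_request: Callable[[], None], count: int = 100
) -> Tuple[List[float], float]:
    """Send `count` requests one after another.

    Returns the individual request times in milliseconds and the
    average number of sequential requests per second.
    """
    durations_ms: List[float] = []
    start = time.perf_counter()
    for _ in range(count):
        t0 = time.perf_counter()
        send_request()  # must block until the response has arrived
        durations_ms.append((time.perf_counter() - t0) * 1000.0)
    total_seconds = time.perf_counter() - start
    return durations_ms, count / total_seconds


# Example use (the URL is a placeholder, not a real emoncms endpoint):
#   import urllib.request
#   post = lambda: urllib.request.urlopen("http://localhost/...").read()
#   times_ms, req_per_s = time_sequential_requests(post, count=100)
```

Because each request only starts once the previous one has finished, the result is a sequential request rate and says nothing about how the server behaves under concurrent load.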

It's important to note that the following results are for sequential requests rather than concurrent requests. It's possible to achieve significantly higher request rates with concurrent requests, which spawn many parallel apache processes. Sequential requests give us a good baseline test to work with.

The CPU on the test machine was set to 2.0 GHz x 4 cores

Testing Mysql

The following results show the effect of turning off the last input and feed time and value metadata entries in the pure mysql implementation:

Mysql with all metadata switched on:

10x sequential posts, 31ms to 79ms @ 4x 2GHz, average 20 sequential req/s.

With the processor set to 0.8GHz on all 4 cores the request rate was 10 sequential req/s

With a single poster process free-running, CPU is 7.4% us and wait is 11.5% wa.

Mysql without input last time value being saved:

10x sequential posts: 26ms to 64ms, average 25 sequential req/s

Mysql without input or feed last value but still histogram last value:

10x sequential posts: 18ms to 31ms, average: 46-48 req/s

Mysql without input or feed last value or histogram last value:

10x sequential posts: 10ms to 11ms, average 94-95 req/s

Redis

Here are the results with the redis implementation that's up on github here:

All metadata enabled: 11ms to 14ms, 94-95 req/s @ 2.0GHz

CPU: 22% us, 0.0% wa

CPU Performance:

In the redis test the CPU utilisation was around 22%, while in the mysql test it was around 7.4%. Idle CPU us is around 0.2%. Redis is, however, handling 4.7x the number of requests. If it were handling the same number of requests, the CPU us might be around 22/4.7 ≈ 4.7%.

Wait

We can see a significant reduction in the amount of time the system spends waiting. With mysql, every time a feed was updated, the time and value of the update were written to the mysql feeds table. The first idea was that this waiting was caused by mysql table locks. However, testing with both MyISAM and InnoDB showed similar overall performance, even though InnoDB uses row-level locking while MyISAM uses table-level locking. Looking at the disk write rate with vmstat and iotop showed a really high write rate with mysql, so it may be that the system was simply waiting because the disk was working so hard. See more on this below.
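To illustrate the idea of keeping the last time and value in memory rather than writing them to a mysql table on every update, here is a minimal sketch. It is not the code in the emoncms redis branch: the key layout `feed:lastvalue:<id>` and the function names are invented for this example, and `client` is assumed to be a redis-py style object providing `hset` and `hgetall`.

```python
import time
from typing import Optional


def feed_key(feed_id: int) -> str:
    # Hypothetical key layout, chosen for this example only.
    return f"feed:lastvalue:{feed_id}"


def set_last_value(
    client, feed_id: int, value: float, timestamp: Optional[int] = None
) -> None:
    """Store the last time and value of a feed in a redis hash."""
    if timestamp is None:
        timestamp = int(time.time())
    client.hset(feed_key(feed_id), mapping={"time": timestamp, "value": value})


def get_last_value(client, feed_id: int) -> dict:
    """Read the last time and value back; empty dict if nothing is stored."""
    return client.hgetall(feed_key(feed_id))
```

With the redis-py package installed and redis-server running locally, `client` could be created with `redis.Redis(decode_responses=True)`. Each update then touches a single hash in memory instead of issuing an UPDATE against the feeds table.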

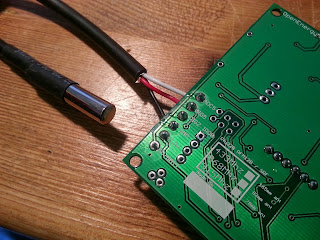

IO Disk write rate:

Here is the output from vmstat with the apache access and redis logs turned off.

Redis

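| r | b | swpd | free | buff | cache | si | so | bi | bo | in | cs | us | sy | id | wa |
|---|---|------|------|------|-------|----|----|----|----|----|----|----|----|----|----|
| 2 | 0 | 0 | 4282700 | 352236 | 2002508 | 0 | 0 | 0 | 0 | 1670 | 44128 | 20 | 7 | 72 | 0 |
| 1 | 0 | 0 | 4281496 | 352236 | 2002976 | 0 | 0 | 0 | 43 | 1622 | 44148 | 20 | 7 | 73 | 0 |
| 1 | 0 | 0 | 4280636 | 352236 | 2003440 | 0 | 0 | 0 | 0 | 1651 | 44424 | 21 | 7 | 72 | 0 |
| 1 | 0 | 0 | 4280096 | 352236 | 2003952 | 0 | 0 | 0 | 1793 | 1678 | 43999 | 21 | 6 | 72 | 0 |
| 1 | 0 | 0 | 4279316 | 352236 | 2004416 | 0 | 0 | 0 | 49 | 1658 | 44106 | 20 | 7 | 73 | 0 |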

MySQL

| r | b | swpd | free | buff | cache | si | so | bi | bo | in | cs | us | sy | id | wa |
|---|---|------|------|------|-------|----|----|----|----|----|----|----|----|----|----|
| 1 | 0 | 0 | 4264420 | 352244 | 2041008 | 0 | 0 | 0 | 4280 | 1695 | 14833 | 7 | 3 | 79 | 11 |
| 1 | 0 | 0 | 4264904 | 352248 | 2039972 | 0 | 0 | 0 | 4296 | 1777 | 15615 | 8 | 3 | 78 | 11 |
| 1 | 0 | 0 | 4264664 | 352252 | 2040312 | 0 | 0 | 0 | 4477 | 1713 | 15072 | 7 | 3 | 79 | 12 |
| 1 | 0 | 0 | 4264440 | 352264 | 2040420 | 0 | 0 | 0 | 4355 | 1700 | 14982 | 7 | 2 | 79 | 11 |
| 0 | 1 | 0 | 4267364 | 352284 | 2037604 | 0 | 0 | 0 | 4332 | 1712 | 15012 | 7 | 2 | 79 | 12 |

Using a larger number of vmstat readings than the 5 listed above, the average write rate was 215kB/s with redis and 4430kB/s with mysql, with idle being 4kB/s. First, it's surprising how high the mysql write rate is. Second, it's surprising how large the reduction in disk writing is: about 21x less, and that's with 4.7 times the post rate. The reduction in the amount of writing per request could therefore be as much as 100x. The quantity of writes cannot be explained only by the kind of writes that are being done in emoncms. Most of it appears to be due to ext4 filesystem journaling (61.16% of write capacity), while mysql is only responsible for a couple of percent.
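The arithmetic behind the 21x and 100x figures can be checked with a few lines. The numbers are the ones quoted above; subtracting the idle write rate before taking the ratio is an assumption made here to show where "about 21x" may come from, and the variable names are only for this example.

```python
redis_write = 215.0   # average write rate with redis (vmstat "bo")
mysql_write = 4430.0  # average write rate with mysql (vmstat "bo")
idle_write = 4.0      # write rate with the system idle
rate_ratio = 4.7      # redis handled 4.7x the requests per second

# Ratio of the raw write rates: about 20.6
raw_ratio = mysql_write / redis_write

# Ratio after subtracting the idle write rate: about 21.0
net_ratio = (mysql_write - idle_write) / (redis_write - idle_write)

# Redis was also serving 4.7x the requests, so the reduction in
# writing per request is larger still: roughly 97 to 99, i.e. ~100x
per_request_raw = raw_ratio * rate_ratio
per_request_net = net_ratio * rate_ratio

print(f"raw: {raw_ratio:.1f}x, net of idle: {net_ratio:.1f}x")
print(f"per request: {per_request_raw:.0f}x to {per_request_net:.0f}x")
```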

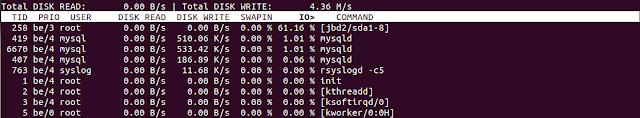

MySQL iotop

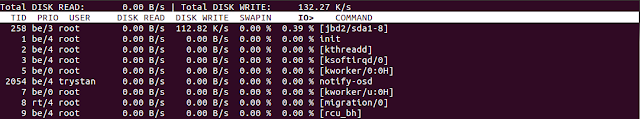

Redis iotop

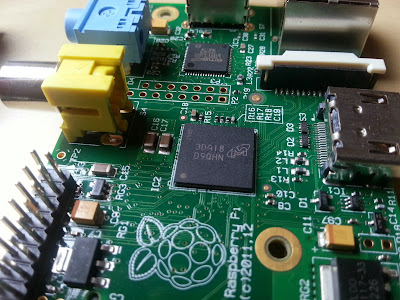

Journaling

"A journaling file system is a file system that keeps track of the changes that will be made in a journal (usually a circular log in a dedicated area of the file system) before committing them to the main file system. In the event of a system crash or power failure, such file systems are quicker to bring back online and less likely to become corrupted" - http://en.wikipedia.org/wiki/Journaling_file_system

Raspberry Pi SD card installation

The problem with running emoncms on SD cards was that we were wearing the SD cards out in only a few months. If redis reduces the amount of writing by around 100x, then this could mean a significantly longer SD card lifespan.

If a filesystem without journaling is used in addition to redis, the lifespan could be extended even further. This does increase the risk of data corruption from power failure, but if such failures are recoverable with a disk check on startup, then that is much better than a worn-out SD card.

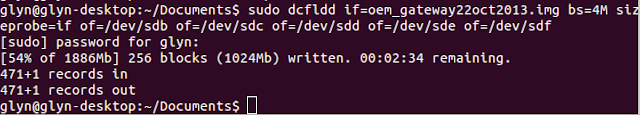

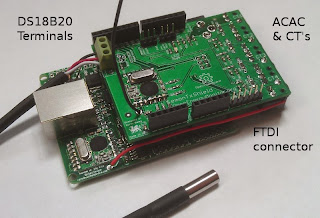

To potentially improve things further, the IPE Debian operating system could be put on a read-only partition, as is currently done with the oem gateway, and the data could be placed on a writable partition using the ext2 or FAT32 filesystem, both of which are non-journaling.

Redis branch on github

If you'd like to try out the redis branch, it's up on github here:

You will need redis-server installed and phpredis: